Systems Thinking

So important yet so rare

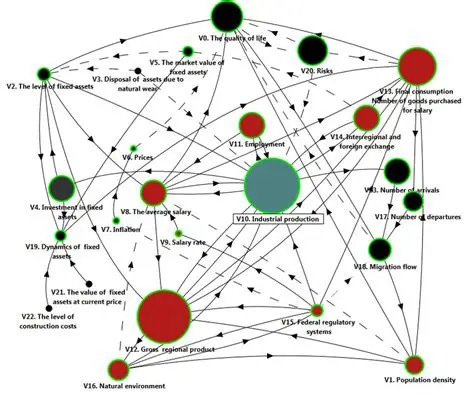

I’ve read a number of definitions and descriptions of systems thinking, all of them confusing. While it may be that we don’t see more systems thinking in practice because it is so poorly understood, it’s more likely that we avoid it because it’s hard to do. The essence of systems thinking is seeing the connections among things. That’s easier said than done: you could argue that, ultimately, everything is connected to everything else, so where do you draw the line? And really, how many connections can you hold in your brain simultaneously? If we didn’t simplify our view of reality, we might never act, out of fear that we’re missing an important downstream consequence that we should have taken into account. See, for example, this diagram of connections among factors affecting investments in capital. While it’s great for understanding why investment decisions are complicated, how useful is it as a guide to action?

In practice, we rely on our ability to react to both the expected and unexpected effects of our actions as a stand-in for systems thinking. If something goes wrong, we’ll fix it, or at least so we tell ourselves. Yet sometimes our actions trigger outcomes we can neither foresee nor correct, and we pay the price. For example, we introduce a new species to control the devastation caused by a known parasite and soon find that the new species has become a threat of its own. Or we cut prices to drive out a competitor, which forces the competitor to innovate its business model in ways it would never have considered otherwise, resulting in a disastrous drop in our sales.

Still, systems thinking is important because it encourages us to look before we leap, perhaps taking some precautionary steps before we go boldly where no one has gone before. We shouldn’t concern ourselves with every possible outcome our actions could produce, but we can at least give some thought to the obvious ones.

Consider Apple. If there were ever a company driven by innovation and product launches, Apple is it. If you work inside Apple, it’s easy to buy into the notion that your product development prowess will continue to allow you to lead the smartphone market for the foreseeable future. You keep adding new features and capabilities to phones no matter the cost to the consumer. There’s plenty of evidence to support your strategy, as your phones remain best sellers. Because you aren’t that concerned about the competition, you don’t invoke systems thinking to project the responses of your rivals, and you may downplay any progress they are making.

The reality is that Apple is no longer #1 in global smartphone sales. That honor goes to Samsung, which offers a wider lineup, including lower-priced phones that appeal to a global audience without the spending power of buyers in wealthy economies. Apple is still doing fine, thank you, but that could change if consumers find that Samsung phones do everything they need at a fraction of the cost. For now, the “cool factor” of Apple’s brand is still attracting enough buyers to keep its models in first place as individual sellers. But put enough Samsung Galaxy phones into the market, add in the right advertising, and things could change. The “accessible luxury” trend (cheaper products that are just as good) is real and, with a worsening economy, likely to accelerate. Systems thinkers would put these facts together and develop strategies to defend against a high-quality, low-cost wave of competition. Because coming out with a “cheap” phone would not be compatible with Apple’s brand, Apple might have to compensate for lost phone sales by investing more in its entertainment arm, for example. For that strategy to work, Apple would need to use systems thinking to explore what factors will affect the profitability of the entertainment industry as the key players expand their influence, and then determine what Apple could do to carve out a defensible position.

Many strategic planning frameworks feature some version of creating “what if” scenarios to overcome insular thinking. The problem with these exercises is not that leaders are incapable of identifying threats to their current position; it’s that they don’t take those threats seriously enough. Their presumption is that the chances of the threats materializing are small, so the threats can be acknowledged but not acted upon. Leaders are capable of systems thinking, but they don’t see the real value in it. Often, systems thinking is called upon only in hindsight, to find what a failed strategic plan missed about the moves of competitors.

Leaders can’t be expected to turn over every rock when looking for threats or opportunities that are not obvious or likely to occur. What leaders should be expected to do is take emerging trends seriously rather than waiting for their full effects to be felt. Currently, one of those threats or opportunities is artificial intelligence. Survey after survey points out how uninformed the majority of senior executives are about the probable implications of AI for their companies. However, one news reporter said that when people at the recent Davos conference weren’t talking about Trump, 90 percent of the conversation was about AI. That’s a good sign that people are waking up. The challenge is keeping up, as AI’s capabilities are growing daily. Senior executives would do well to call upon AI experts to help them develop a company-specific systems thinking view of the factors that will affect AI deployment in their organization. That way, they will know which levers to pull to increase the effectiveness of their AI investments as the future of AI applications becomes clearer.

Is systems thinking valuable? Only if we don’t want to find ourselves saying in hindsight, “We should have thought of that!” when it really matters.

I think the reason “systems thinking” stays confusing is that people treat it as an attempt to see everything.

But across every domain (biology, business, engineering, humans), the same pattern repeats:

You don’t need more connections — you need the right invariants:

• constraints (what cannot be violated),

• feedback (what tells you what’s real),

• boundaries (what you’re actually modelling),

• recovery (what you do when you’re wrong).

Without those, “everything is connected” becomes either a diagram… or a justification to do nothing.

With them, systems thinking becomes simple:

act small, stay reversible, and keep reality in the loop.